🚀 Introduction

If you’ve ever wondered how tools like ChatGPT “understand” your questions, here’s the truth:

👉 They don’t understand words like humans do.

👉 They convert words into numbers.

These numbers are called embeddings, and they are the backbone of modern AI systems.

Without embeddings:

- No semantic search

- No recommendation systems

- No smart chatbots

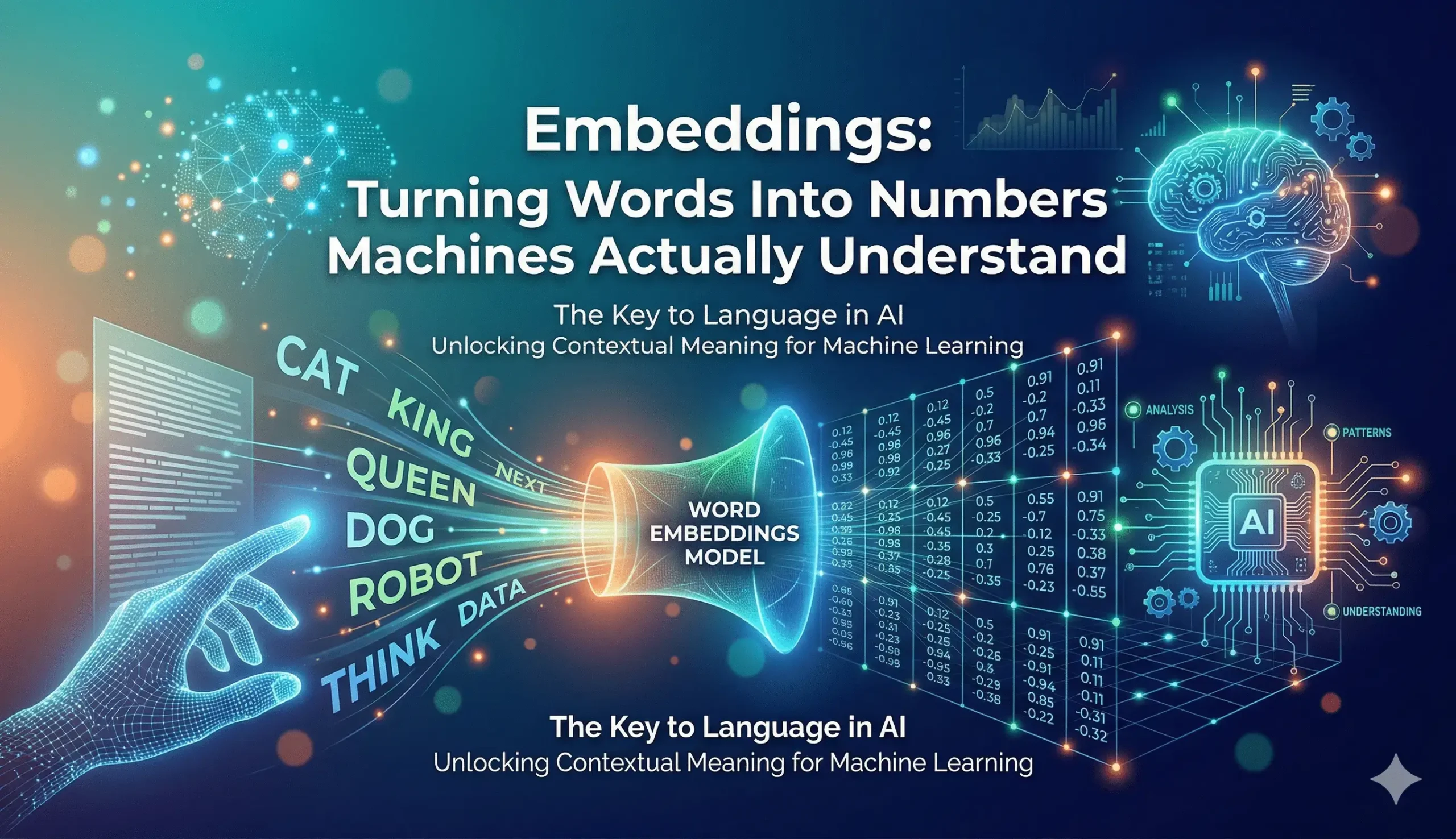

🔍 What Are Embeddings?

Embeddings are:

👉 Numerical representations of words, sentences, or data in vector form

Instead of storing:

"DevOps is powerful"

AI converts it into something like:

[0.21, -0.45, 0.78, ...]

Each word or sentence becomes a vector in multi-dimensional space.

🧠 Why Do We Need Embeddings?

Machines cannot understand:

- Language

- Meaning

- Context

They only understand:

👉 numbers and math

Embeddings help bridge that gap by:

- Capturing meaning

- Preserving relationships

- Enabling similarity comparison

📊 Simple Example (Important)

Words with similar meaning are placed closer in vector space:

- “King” ≈ “Queen”

- “DevOps” ≈ “Automation”

- “Dog” ≠ “Laptop”

👉 Distance between vectors reflects similarity: the closer two vectors are, the more similar their meanings

This is how AI knows:

- What’s related

- What’s not
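To make "closer in vector space" concrete, here is a minimal sketch of cosine similarity, one common way to compare embeddings. The three-dimensional vectors are hand-picked toy values, not output from a real model (real embeddings typically have hundreds or thousands of dimensions), and the names `cosineSimilarity` and `toyVectors` are just illustrative.

```javascript
// Cosine similarity: dot(a, b) / (|a| * |b|).
// Ranges from -1 to 1; values near 1 mean the vectors point the same way.
function cosineSimilarity(a, b) {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Hypothetical toy vectors (made-up values for illustration only).
const toyVectors = {
  king: [0.9, 0.8, 0.1],
  queen: [0.85, 0.9, 0.15],
  laptop: [0.1, 0.05, 0.95],
};

const kingQueen = cosineSimilarity(toyVectors.king, toyVectors.queen);
const kingLaptop = cosineSimilarity(toyVectors.king, toyVectors.laptop);

console.log("king vs queen: ", kingQueen.toFixed(3));
console.log("king vs laptop:", kingLaptop.toFixed(3));
```

With these toy values, the king/queen pair scores much higher than the king/laptop pair, which is the behavior the examples above describe.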

⚙️ How Embeddings Work (Simplified)

Step 1: Input text

"Deploy app on Kubernetes"

Step 2: Model processes it

(Using trained neural networks)

Step 3: Output vector

[0.12, 0.98, -0.33, ...]

This vector captures:

- Meaning

- Context

- Intent

💻 Real-World Use Cases

1. 🔎 Semantic Search

Instead of keyword matching:

Search:

“How to deploy app”

Can match:

“Kubernetes deployment guide”
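A rough sketch of how that matching could work: embed every document once, embed the query, then rank documents by cosine similarity. The vectors below are made-up 3-D stand-ins for real embeddings, and `documents`, `semanticSearch`, and `queryVector` are hypothetical names used only for this illustration.

```javascript
// Hypothetical pre-computed embeddings (toy 3-D values, not real model output).
const documents = [
  { title: "Kubernetes deployment guide", vector: [0.9, 0.1, 0.2] },
  { title: "Chocolate cake recipe", vector: [0.05, 0.95, 0.1] },
  { title: "Rolling back a failed release", vector: [0.7, 0.1, 0.6] },
];

// Cosine similarity between two equal-length vectors.
function cosineSimilarity(a, b) {
  const dot = a.reduce((sum, value, i) => sum + value * b[i], 0);
  const norm = (v) => Math.sqrt(v.reduce((sum, value) => sum + value * value, 0));
  return dot / (norm(a) * norm(b));
}

// Score every document against the query and return the best matches first.
function semanticSearch(queryVector, docs, topK = 2) {
  return docs
    .map((doc) => ({
      title: doc.title,
      score: cosineSimilarity(queryVector, doc.vector),
    }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK);
}

// Toy vector standing in for the embedding of "How to deploy app".
const queryVector = [0.85, 0.15, 0.25];
const results = semanticSearch(queryVector, documents);

console.log(results);
```

Note that the query and the top-ranked title share no exact words; the match comes entirely from the vectors being close.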

2. 🤖 Chatbots

- Understand intent

- Give relevant responses

3. 🧾 Recommendation Systems

- Netflix

- Amazon

👉 “You may also like” suggestions are often driven, at least in part, by embedding similarity
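One simple way to turn embedding similarity into recommendations is to average the vectors of items a user liked and then rank the remaining items against that average. This is only a sketch of the idea, not how Netflix or Amazon actually work; the catalog, its 2-D vectors, and the function names are all invented for illustration.

```javascript
// Hypothetical catalog with toy 2-D item embeddings (made-up values).
const catalog = [
  { title: "Space Documentary", vector: [0.9, 0.1] },
  { title: "Sci-Fi Thriller", vector: [0.8, 0.3] },
  { title: "Baking Show", vector: [0.1, 0.9] },
  { title: "Cooking Contest", vector: [0.2, 0.8] },
];

// Element-wise average of a non-empty list of equal-length vectors.
function meanVector(vectors) {
  if (vectors.length === 0) {
    throw new Error("Need at least one vector");
  }
  const sum = new Array(vectors[0].length).fill(0);
  for (const vector of vectors) {
    vector.forEach((value, i) => {
      sum[i] += value;
    });
  }
  return sum.map((value) => value / vectors.length);
}

// Cosine similarity between two equal-length vectors.
function cosine(a, b) {
  const dot = a.reduce((acc, value, i) => acc + value * b[i], 0);
  const norm = (v) => Math.sqrt(v.reduce((acc, value) => acc + value * value, 0));
  return dot / (norm(a) * norm(b));
}

// Rank items the user has not liked yet by similarity to their "taste" vector.
function recommend(likedTitles, items, topK = 1) {
  const liked = items.filter((item) => likedTitles.includes(item.title));
  const taste = meanVector(liked.map((item) => item.vector));
  return items
    .filter((item) => !likedTitles.includes(item.title))
    .map((item) => ({ title: item.title, score: cosine(taste, item.vector) }))
    .sort((a, b) => b.score - a.score)
    .slice(0, topK);
}

const picks = recommend(["Space Documentary"], catalog);
console.log(picks);
```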

4. 📂 Log Analysis in DevOps

You can:

- Convert logs into embeddings

- Find similar errors

- Detect anomalies

👉 Huge productivity boost

🔥 Embeddings in DevOps (Practical Angle)

Example:

You have logs:

Error: Pod failed due to memory limit

Instead of manual searching:

- Convert logs → embeddings

- Compare with previous issues

- Suggest fixes automatically

👉 Saves hours of debugging
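The workflow above can be sketched as a lookup against previously solved incidents. Everything here is an assumption made for illustration: the incident texts are examples rather than verbatim Kubernetes output, the fixes are placeholders, the 3-D vectors are made up, and the `0.8` threshold is an arbitrary starting point you would tune on real data.

```javascript
// Hypothetical knowledge base of past incidents with toy embeddings.
const pastIncidents = [
  {
    log: "Pod OOMKilled: container exceeded memory limit",
    fix: "Raise the memory limit or investigate a leak",
    vector: [0.9, 0.2, 0.1],
  },
  {
    log: "ImagePullBackOff: image not found",
    fix: "Check the image name, tag, and registry credentials",
    vector: [0.1, 0.9, 0.2],
  },
  {
    log: "Readiness probe failed: connection refused",
    fix: "Verify the probe port and the app startup time",
    vector: [0.2, 0.1, 0.9],
  },
];

// Cosine similarity between two equal-length vectors.
function cosine(a, b) {
  const dot = a.reduce((acc, value, i) => acc + value * b[i], 0);
  const norm = (v) => Math.sqrt(v.reduce((acc, value) => acc + value * value, 0));
  return dot / (norm(a) * norm(b));
}

// Return the closest past incident, or null when nothing is similar enough.
// A null result can be treated as a possible new or anomalous error.
function findSimilarIncident(logVector, incidents, threshold = 0.8) {
  let best = null;
  for (const incident of incidents) {
    const score = cosine(logVector, incident.vector);
    if (best === null || score > best.score) {
      best = { ...incident, score };
    }
  }
  return best !== null && best.score >= threshold ? best : null;
}

// Toy vector standing in for "Error: Pod failed due to memory limit".
const newLogVector = [0.85, 0.25, 0.15];
const match = findSimilarIncident(newLogVector, pastIncidents);
console.log(match ? `Suggested fix: ${match.fix}` : "No similar incident found");

// A vector unlike anything seen before falls below the threshold.
const unknown = findSimilarIncident([-0.5, -0.4, 0.1], pastIncidents);
console.log(unknown === null ? "Possible anomaly" : unknown.log);
```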

🧪 Simple Code Example (OpenAI)

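    // Requires the "openai" npm package and an ES module (for top-level await).
    import OpenAI from "openai";

    // By default the client reads the API key from the OPENAI_API_KEY environment variable.
    const openai = new OpenAI();

    // Request an embedding for a single input string.
    const embedding = await openai.embeddings.create({
      model: "text-embedding-3-small",
      input: "How to deploy Kubernetes app",
    });

    // The vector is an array of floats on the first (and only) result.
    console.log(embedding.data[0].embedding);

⚖️ Advantages of Embeddings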

- Understand meaning, not just keywords

- Enable fast similarity search

- Power modern AI applications

- Improve automation

⚠️ Limitations (don’t skip this)

- Not perfect understanding

- Can misinterpret context

- Needs suitable storage at scale (typically a vector DB)

- High-dimensional data → complex

🧠 Embeddings vs Keywords

| Feature | Keywords ❌ | Embeddings ✅ |
|---|---|---|
| Exact match | Yes | No |
| Meaning | No | Yes |
| Context | No | Yes |
| Flexibility | Low | High |
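To see the "Exact match" row in action, here is a small sketch of naive keyword matching. The helper names `tokenize` and `keywordOverlap` are invented for this example, and real keyword search engines add stemming, synonyms, and ranking on top of this basic idea.

```javascript
// Split text into lowercase word tokens.
function tokenize(text) {
  return text.toLowerCase().split(/[^a-z0-9]+/).filter(Boolean);
}

// Keyword matching: count query words that appear verbatim in the text.
function keywordOverlap(query, text) {
  const textWords = new Set(tokenize(text));
  return tokenize(query).filter((word) => textWords.has(word)).length;
}

const query = "How to deploy app";
const title = "Kubernetes deployment guide";

// "deploy" and "deployment" are different tokens, so nothing matches.
const overlap = keywordOverlap(query, title);
console.log(`Shared keywords: ${overlap}`); // Shared keywords: 0

// Exact wording does match.
const exact = keywordOverlap("deploy app", "How to deploy app on Kubernetes");
console.log(`Shared keywords: ${exact}`); // Shared keywords: 2
```

Plain keyword matching finds no connection between the query and the guide, even though they are clearly related. Comparing embeddings instead is what lets a search treat the two as close.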

❓ FAQs

1. Are embeddings the same as AI models?

No. Embeddings are the vectors a model outputs, not the model itself.

2. Do embeddings store full meaning?

Not perfectly, but they capture semantic similarity well.

3. Where are embeddings stored?

In vector databases, or databases with vector support, such as:

- Pinecone

- Weaviate

- Supabase (Postgres with the pgvector extension)
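At its core, a vector store does two things: it saves vectors under an id, and it returns the stored vectors nearest to a query. The class below is a toy in-memory sketch of that idea. It is not the API of Pinecone, Weaviate, or Supabase, and the name `InMemoryVectorStore` and its methods are assumptions for illustration.

```javascript
// Minimal in-memory stand-in for a vector store (illustrative only).
class InMemoryVectorStore {
  constructor() {
    this.items = new Map(); // id -> { vector, metadata }
  }

  // Insert a vector, or overwrite the one already stored under this id.
  upsert(id, vector, metadata = {}) {
    this.items.set(id, { vector, metadata });
  }

  // Brute-force search: score every stored vector and keep the topK best.
  query(vector, topK = 3) {
    const scored = [];
    for (const [id, item] of this.items) {
      scored.push({
        id,
        score: InMemoryVectorStore.cosine(vector, item.vector),
        metadata: item.metadata,
      });
    }
    return scored.sort((a, b) => b.score - a.score).slice(0, topK);
  }

  // Cosine similarity between two equal-length vectors.
  static cosine(a, b) {
    const dot = a.reduce((acc, value, i) => acc + value * b[i], 0);
    const norm = (v) => Math.sqrt(v.reduce((acc, value) => acc + value * value, 0));
    return dot / (norm(a) * norm(b));
  }
}

// Toy 2-D vectors standing in for real embeddings.
const store = new InMemoryVectorStore();
store.upsert("doc-a", [1, 0], { title: "Deploying to Kubernetes" });
store.upsert("doc-b", [0, 1], { title: "Office lunch menu" });
store.upsert("doc-c", [0.9, 0.1], { title: "Helm chart basics" });

const nearest = store.query([1, 0], 2);
console.log(nearest.map((hit) => hit.id));
```

Scanning every vector like this is fine for small datasets. Dedicated vector databases typically rely on approximate nearest-neighbor indexes so that queries stay fast as the collection grows.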

🏁 Conclusion

Embeddings are the foundation of modern AI.

👉 They convert human language into something machines can process

👉 They enable search, automation, and intelligence

If you’re working in DevOps or building AI tools:

👉 Understanding embeddings is not optional anymore.

💡 Pro Tip:

In my experience working in DevOps, embeddings can significantly reduce the time spent on log analysis and debugging when combined with vector search tools.