When you type “Build me an app” into ChatGPT, you probably think the AI is reading those 5 words. It’s not. AI doesn’t even have the concept of words — it reads something else entirely.

AI doesn’t read words. Words don’t exist for it. It reads something else entirely — tokens.

And these tokens decide everything:

- 🧠 How smart your AI is

- 📦 How much it can remember

- 💰 How big your bill is going to be

This is the most fundamental concept in AI engineering. Without understanding tokens, nothing else makes sense.

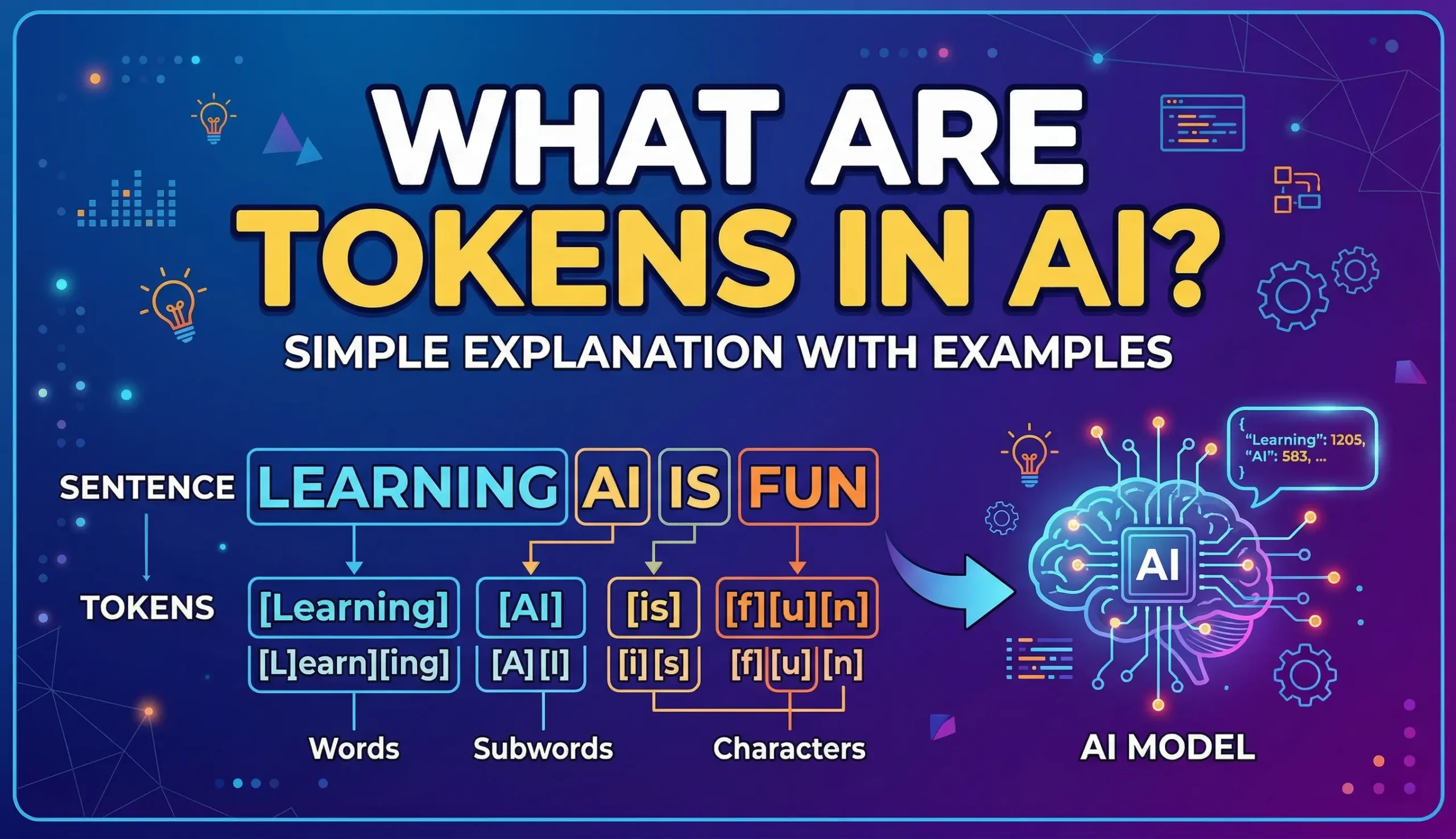

What Are Tokens?

Let’s look at it directly. OpenAI has a tokenizer tool — whatever text you type in, it shows you exactly how AI breaks it down.

Example: “Hello, how are you?”

5 words. 6 tokens. Simple so far.

Now try “I am building an application” — depending on the model, “application” might be 1 or 2 tokens. “Artificial Intelligence” — 2 tokens. Easy.

Here’s Where It Gets Interesting

Type the same thing in Hindi: “मुझे एक ऐप बनाना है”

Same meaning. But count the tokens — almost double or triple compared to English.

Bengali? Even more. Japanese? Almost every character becomes its own token.

Why non-English costs more

The tokenizer was trained on English text. English words are in its vocabulary. Hindi, Bengali, Japanese — the tokenizer has to break these down character by character. That’s why non-English languages use significantly more tokens for the same meaning.

How Was the Tokenizer Built? (BPE)

The algorithm behind tokenization is called BPE — Byte Pair Encoding. Sounds complex, but the concept is simple.

Step 1: Start with individual characters

Every letter is one token. h-e-l-l-o = 5 tokens.

Step 2: Find the most common pair

Look at which 2 characters appear together most often in the training text. For example, t and h appear together constantly — “the”, “that”, “this”, “they”, “with”. So merge th into a single token.

Step 3: Repeat

th and e appear together a lot? Merge them. Now the is one token.

Step 4: Keep going

ing→ one tokention→ one tokenHello→ one token

This process ran on billions of English text. That’s why common English words = 1 token. But Hindi words? The tokenizer barely saw any Hindi text during training. So it breaks बनाना down character by character.

GPT-4o’s vocabulary

GPT-4o has a vocabulary of ~200,000 tokens — 200,000 common subwords that it learned from training data. Mostly English. This is why English is inherently cheaper and more efficient to process.